Timnit Gebru is shaping the future of AI.


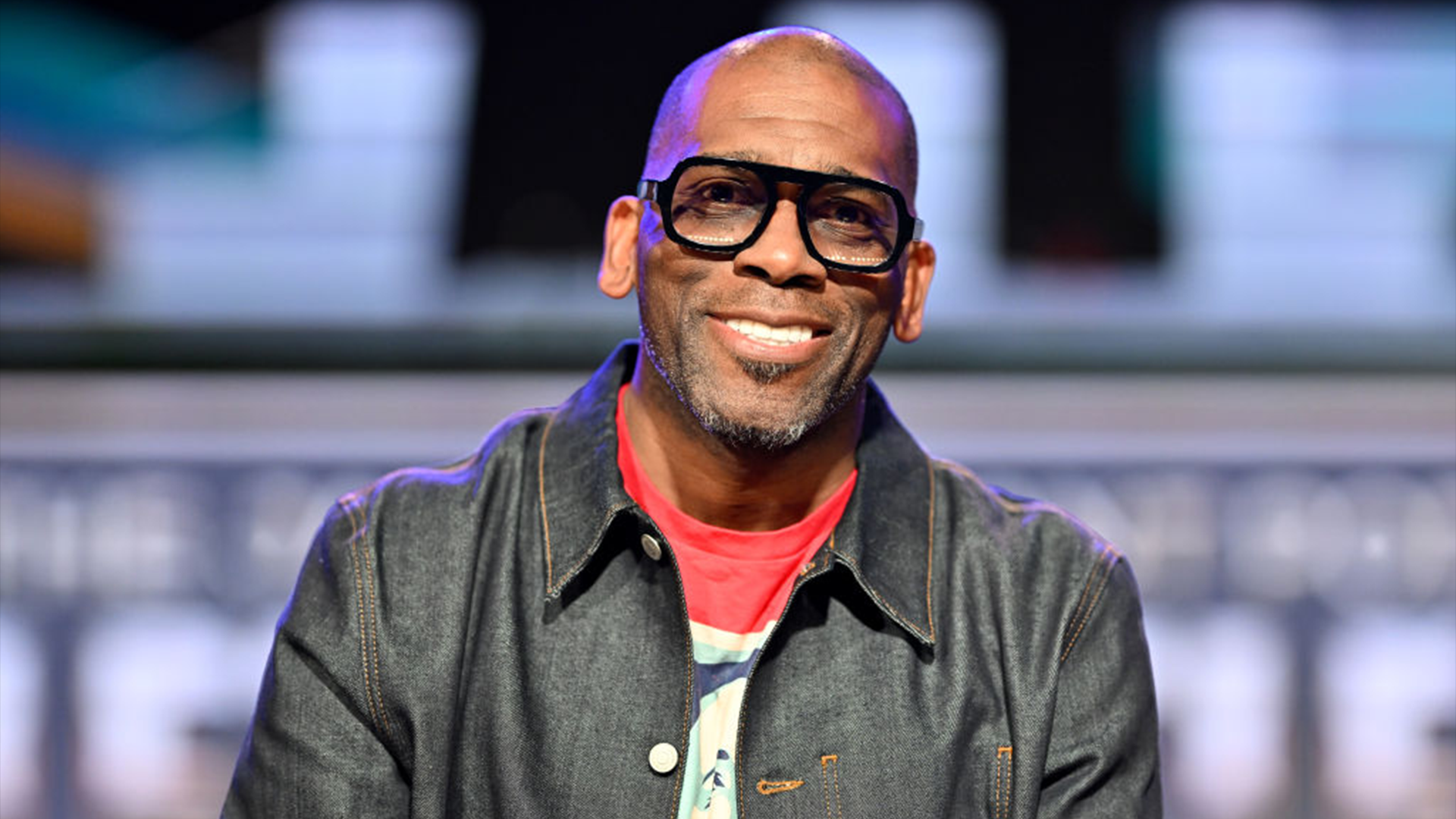

Non-Linear Career Journey

Gebru has always loved math, science, and music. She attended Stanford University from 2008 to 2017, earning a bachelor’s, master’s, and Ph.D. in electrical engineering—the same field her father worked in. She combined her interests to launch her career at Apple, first as an audio hardware intern in 2004. A year later, she was hired as an audio systems engineer and worked part-time as an audio engineer from 2007 until her departure four years later.

Gebru’s professional journey has not been linear. In 2011, she co-founded Motion Think, a company that leveraged design thinking to create solutions for small businesses. While pursuing her Ph.D. at Stanford, she co-founded and became president of Black in AI after attending one of the largest academic conferences in 2016. At that time, she realized that Black professionals were significantly underrepresented.

“The following year, we had a workshop where Black people from around the world came. We provided funding. We raised money, and they could present their work and meet each other. And we had 300 Black people at the conference. So we jumped from five to 300, and then after that, we created a forum where people could ask each other questions and post job opportunities, etc. We were able to have fee waivers for students who are applying to graduate school programs,” she told AFROTECH™.

Gebru also worked as a postdoctoral researcher at Microsoft in New York before joining Google in 2018 as co-lead of its Ethical AI Research Team. As AFROTECH™ previously reported, Gebru was the only Black woman researcher at the time of her appointment to the team. Together with her co-lead, Margaret Mitchell, she championed diversity and was intentional about establishing a “safe space” for people from all marginalized backgrounds, including queer, Black, and Latino individuals.

“We had to fight battles with respect to discrimination in the workplace. We had to fight battles with respect to our voices being heard or our team being taken seriously, or trying to steer the company in a direction where it is not going heads down on creating harmful AI systems,” she explained to us.

Fired By Google

In 2020, Google fired Gebru. She says the firing stemmed from a research paper she co-wrote on the dangers of large language models, the technology underlying platforms such as ChatGPT (launched in 2022) and similar systems.

“We saw this race to create larger and larger models, and these were primarily harming marginalized communities,” Gebru said. “It takes a lot of environmental resources and computing power to train and evaluate these models and run them. Because of environmental racism, the people who bear the burden and the costs are not the same as the people who benefit from these systems. Then, when you look at the views that are propagated through these systems, they’re hegemonic views, and, of course, racist, sexist, and all perpetuating all of the ‘isms.’ So, we wrote this paper, and we were told that we had to take our name off of this paper and/or withdraw it. I was willing to do it as long as we had a discussion on what kind of research we could do and what kind of processes we needed to follow because we followed all of the processes we knew in the company. Then I was fired, and they said that I had resigned.”

Distributed AI Research Institute

After she was fired, Gebru received backing from colleagues at the company and letters from members of Congress. She regrouped and launched the Distributed AI Research Institute (DAIR), which continues the research that was frowned upon at Google. It is built around the premise that while AI will be ever-present, its harms can be mitigated through thoughtful deployment, responsible use, and the inclusion of diverse perspectives, according to the organization’s LinkedIn page.

DAIR works with computer scientists and engineers, but it goes a step further by working with individuals who have been directly impacted by AI’s harmful side.

“When OpenAI was first founded in 2015, they were touted as saviors of the world trying to do beneficial AI to humanity or something like that. And you see the people involved, it’s billionaires like Elon Musk [CEO of Tesla], Peter Thiel [PayPal co-founder], and a bunch of white guys based out of San Francisco who have no interest in benefiting any kind of society,” she said. “So, I was very irritated by the uncritical take and how they were being described.”

DAIR’s team includes individuals like Adrienne Williams, a former Amazon delivery driver who also worked as a junior high teacher at a tech-owned charter school. In those roles, she observed billionaires allegedly experimenting on her students through personalized education, as well as workers being surveilled and controlled by algorithmic management.

As a result, she has been organizing since 2018 and has worked alongside activists, politicians, and researchers in hopes of creating better outcomes at the intersection of the tech, labor, and education industries, according to DAIR’s website.

“So, when I created DAIR, I wanted to make sure that the institute’s work was shaped not just by computer scientists and engineers but people who have lived experience with respect to some of the harms that have been perpetuated in their lives through technology and how to stop those harms — whether it is through surveillance or worker exploitation,” Gebru stated.

AFROTECH™ Future 50

Gebru’s contributions to her field landed her among AFROTECH™’s 2025 Legacy Leaders in the Future Maker category. Our Legacy Leaders have already set the standard for impact, vision, and excellence for the Future 50. Other categories include Dynamic Investors, Visionary Founders, Corporate Catalysts, and Changemakers.

If you’re building something game-changing, pushing the industry forward, or breaking down barriers for the next generation, we want to hear how you are doing work similar to Gebru’s.

“Build a network. You can’t just be a sacrificial lamb on your own. So everybody plays a role. When I was fired from Google, I had a lot of people backing me up inside and out because I took the time to build that network,” Gebru said, offering advice to Future Makers. “So, in some ways, it’s like if there’s no table, build a table. You don’t have to boil the ocean. Start small. Start local. Start with your classmates, your neighbors, or whoever is around you, and try to build the environment that you wanted for yourself that didn’t exist for you. And I think that will help you change things rather than trying to do things alone.”

Submit to be considered. The deadline is April 11, 2025.