On Feb. 12, 2021, the Minneapolis Police Department announced that its officers are banned from using facial recognition software while apprehending suspects.

According to TechCrunch, the problematic police department, best known as the home of the officers who killed George Floyd in May 2020, has a “relationship” with Clearview AI, a firm with a record of “scraping” images from social media networks and selling them, wholesale, to police departments and federal law enforcement agencies.

Thirteen members of the city council voted in favor of banning the use of facial recognition software, with no opposition. Minneapolis is just the latest city to adopt such a ban, joining Boston and San Francisco in this landmark move.

However, the bans do not cover the sale of the images to private companies, which many privacy experts cite as a growing concern.

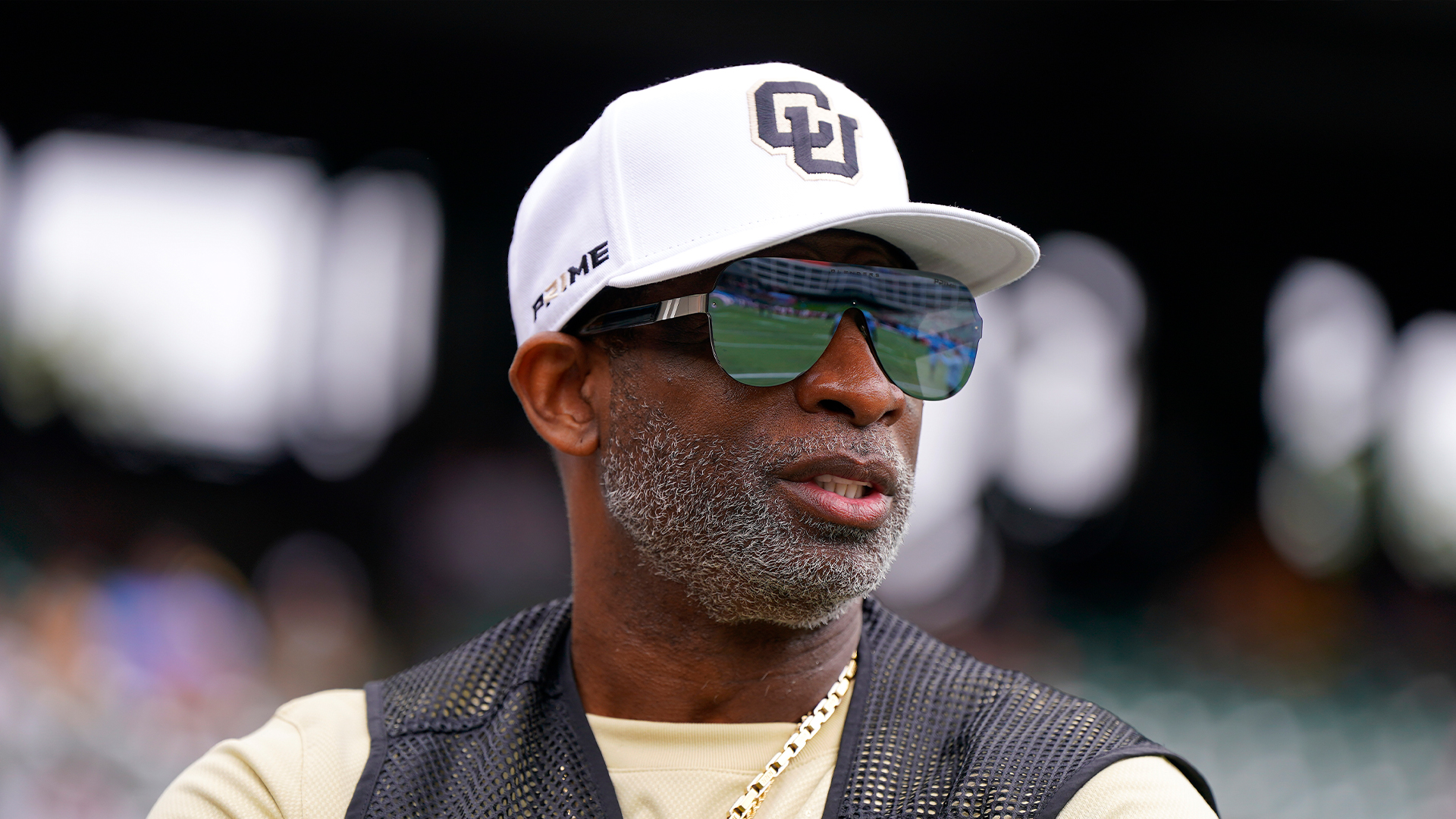

But there’s another, more salient reason why facial recognition software is facing staunch opposition and even outright bans: growing concern about aggressive policing. According to the ACLU, facial recognition software is dangerous to people of all races. In the United States, though, there is increasing concern that this software could be deadly for Black Americans, who could be targeted and even killed merely for existing.

“Groundbreaking research conducted by Black scholars Joy Buolamwini, Deb Raji, and Timnit Gebru snapped our collective attention to the fact that yes, algorithms can be racist,” reports the ACLU. “Buolamwini and Gebru’s 2018 research concluded that some facial analysis algorithms misclassified Black women nearly 35 percent of the time, while nearly always getting it right for white men. A subsequent study by Buolamwini and Raji at the Massachusetts Institute of Technology confirmed these problems persisted with Amazon’s software.”

But even if the algorithms are somehow adjusted to level the playing field, the use of the technology remains racist, because the system in which it operates is itself racist.

“Black people face overwhelming disparities at every single stage of the criminal punishment system, from street-level surveillance and profiling all the way through to sentencing and conditions of confinement,” the ACLU notes. “Surveillance of Black people in the U.S. has a pernicious and largely unaddressed history, beginning during the antebellum era.”